1,959 Stories

I forgot the character for “horse” three times in one week.

Not a complex character. Not some obscure radical buried in a classical text. 马. Three strokes. One of the first hundred you learn. I'd studied it, reviewed it, written it out by hand. And still, on Wednesday morning, staring at a flashcard, my brain served up nothing. Just white space where a horse should have been.

This is the central problem of learning Mandarin as an adult. The characters don't stick. You learn thirty in a week, forget twenty by Friday. Anki helps, but Anki is a blunt instrument. It knows when to show you a card again. It doesn't know how you remembered it, or why you forgot it, or what confused you. It treats every character like every other character: a fact to be drilled until it survives.

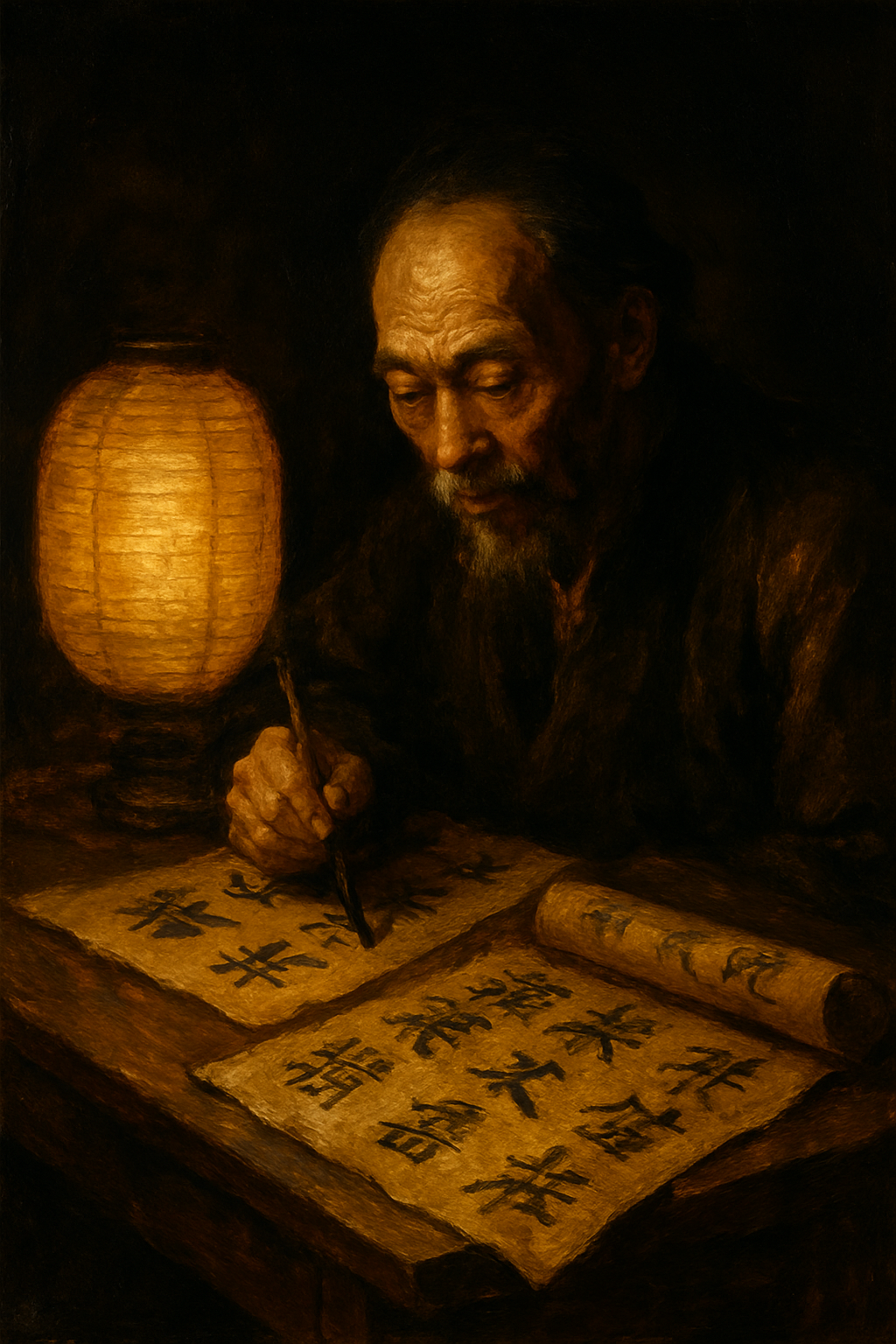

The Mandarin Blueprint method works differently. Every character becomes a scene in a movie playing inside a building you already know. An actor performs an action with a prop in a specific room. 马 isn't an abstract shape. It's Sean Connery kicking a toy horse across the kitchen of your childhood home. The pinyin maps to the actor, the tone maps to the room, the meaning maps to the action. You don't memorize the character. You watch the scene and the character reassembles itself in your mind.

The problem: no existing SRS tool understood any of this.

Anki showed me flashcards. It had no concept of actors, sets, rooms, or props. I couldn't search for “every character where Brendan Lee is the actor” or “every story set in my parents' gaff.” The mnemonic system lived in my head and on Traverse.link. The review system lived in Anki. The two never talked to each other.

So I built the bridge.

Mandarin Scaffold runs on Django and HTMX. It holds 1,959 stories across 67 levels, each one a complete movie scene: the character, its pinyin, its keyword, the actor who performs the action, the set where it happens, the room within that set, the prop that carries the meaning, and the story text that stitches it all together. I scraped the stories from Traverse, parsed the JSON, mapped actors to phonetic components, mapped sets to tone positions, and loaded the whole system into a SQLite database that fits in my pocket.
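To make the shape of one story concrete, here is a minimal sketch using Python's built-in `sqlite3` module rather than Scaffold's actual Django models. The table name, column names, and helper functions are illustrative assumptions, and the level number in the example row is a placeholder.

```python
import sqlite3

# Hypothetical schema: one row per story, one column per part of the scene.
SCHEMA = """
CREATE TABLE story (
    id         INTEGER PRIMARY KEY,
    level      INTEGER NOT NULL,
    hanzi      TEXT NOT NULL,
    pinyin     TEXT NOT NULL,
    keyword    TEXT NOT NULL,
    actor      TEXT NOT NULL,
    set_name   TEXT NOT NULL,
    room       TEXT NOT NULL,
    prop       TEXT,
    story_text TEXT NOT NULL
)
"""


def open_db(path=":memory:"):
    """Open a database (in memory by default) and create the story table."""
    conn = sqlite3.connect(path)
    conn.row_factory = sqlite3.Row
    conn.execute(SCHEMA)
    return conn


def add_story(conn, story):
    """Insert one story; `story` is a dict with a key for every column except id."""
    conn.execute(
        "INSERT INTO story (level, hanzi, pinyin, keyword, actor, set_name,"
        " room, prop, story_text) VALUES (:level, :hanzi, :pinyin, :keyword,"
        " :actor, :set_name, :room, :prop, :story_text)",
        story,
    )


def hanzi_by_actor(conn, actor):
    """Return every character whose scene is performed by the given actor."""
    rows = conn.execute(
        "SELECT hanzi FROM story WHERE actor = ? ORDER BY level, id", (actor,)
    )
    return [row["hanzi"] for row in rows]


if __name__ == "__main__":
    conn = open_db()
    add_story(conn, {
        "level": 1,  # placeholder, not the character's real level
        "hanzi": "马", "pinyin": "mǎ", "keyword": "horse",
        "actor": "Sean Connery", "set_name": "childhood home",
        "room": "kitchen", "prop": "toy horse",
        "story_text": "Sean Connery kicks a toy horse across the kitchen.",
    })
    print(hanzi_by_actor(conn, "Sean Connery"))  # ['马']
```

Once the scene parts are columns, "every character where Brendan Lee is the actor" stops being a memory exercise and becomes a one-line query.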

The heart of it is SM-2, the spaced repetition algorithm that Anki's original scheduler is based on. But wrapped in context. When I review a card, I don't just see the character and guess the meaning. I see the full scene: the actor, the set, the room, the prop. I can rate my recall from Again to Easy, and the algorithm adjusts the interval. A card I nail gets pushed out four days, then ten, then twenty-six. A card I fumble comes back in the same session.
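For readers who haven't seen SM-2 written down, here is a minimal sketch of one review step using the textbook constants (first interval 1 day, second 6 days, starting ease 2.5, ease floor 1.3). It is not Scaffold's code: the mapping from the four grade keys to SM-2's 0 to 5 quality scale is my assumption for illustration, as is modelling "comes back in the same session" as an interval of zero days. With these textbook constants the intervals come out differently from the four, ten, twenty-six quoted above.

```python
from dataclasses import dataclass

MIN_EASE = 1.3  # textbook SM-2 floor for the ease factor

# Assumed mapping from grade keys to SM-2 quality scores:
# 1 = Again, 2 = Hard, 3 = Good, 4 = Easy.
GRADE_TO_QUALITY = {1: 2, 2: 3, 3: 4, 4: 5}


@dataclass(frozen=True)
class CardState:
    repetitions: int = 0    # consecutive successful reviews
    interval_days: int = 0  # days until the card is due again
    ease: float = 2.5       # textbook SM-2 starting ease factor


def review(state, grade):
    """Return the card's next state after one review graded 1 to 4."""
    quality = GRADE_TO_QUALITY[grade]
    if quality < 3:
        # Failed recall: restart the sequence and keep the ease unchanged.
        # Interval 0 stands in for "show it again in this session".
        return CardState(repetitions=0, interval_days=0, ease=state.ease)

    # Standard SM-2 ease update, clamped at the floor.
    delta = 0.1 - (5 - quality) * (0.08 + (5 - quality) * 0.02)
    ease = max(MIN_EASE, state.ease + delta)

    if state.repetitions == 0:
        interval = 1
    elif state.repetitions == 1:
        interval = 6
    else:
        interval = round(state.interval_days * ease)
    return CardState(state.repetitions + 1, interval, ease)
```

Grading a new card Good three times in a row with this sketch yields intervals of 1, 6, and 15 days. A single Again at any point drops it back to the start of the sequence.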

The keyboard shortcuts make sessions fast. Space to reveal, 1 through 4 to grade, N for next, C for confused. No mouse. No clicking through menus. Just the character, the scene, and a number.

Then the features started accumulating. Sentence cards with cloze deletion: a full Chinese sentence with the target character blanked out, forcing recall in context rather than isolation. A deep dive mode where I can drill into a character, see similar characters that confuse me, generate example sentences through an LLM API, and save the ones that help. Level paragraphs that compose sentences using only the characters from a single level, so I can read something coherent instead of reviewing isolated fragments.
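The cloze step itself is plain string work. A minimal sketch, assuming the card stores the sentence and the target character as ordinary strings; the function name and the blank marker are hypothetical:

```python
def make_cloze(sentence: str, target: str, blank: str = "_") -> tuple[str, str]:
    """Blank out every occurrence of `target` and return (prompt, answer)."""
    if not target or target not in sentence:
        raise ValueError(f"{target!r} does not appear in {sentence!r}")
    # One blank per hidden character, so the prompt keeps the sentence length.
    prompt = sentence.replace(target, blank * len(target))
    return prompt, target


prompt, answer = make_cloze("我喜欢骑马。", "马")
print(prompt)  # 我喜欢骑_。
print(answer)  # 马
```

Raising on a missing target is deliberate: a sentence card that doesn't contain its own character is a data bug worth catching at load time, not mid-review.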

The AI integration started small. Claude Haiku generates example sentences for each character, three per hanzi, graded by difficulty. The system tracks token usage and cost per call, with hard limits, because I've seen what happens when you let an API run unmonitored.
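The cost guard can be sketched without touching any real API client. The class below is an illustration under assumptions, not Scaffold's implementation: the names are hypothetical, and the per-million-token prices are placeholder numbers rather than any provider's actual pricing. The token counts would come from whatever usage figures the API response reports.

```python
from dataclasses import dataclass, field


class BudgetExceeded(RuntimeError):
    """Raised when the spending limit has been reached."""


@dataclass
class UsageTracker:
    max_cost_usd: float           # hard limit for this tracker's lifetime
    input_price_per_mtok: float   # placeholder price per million input tokens
    output_price_per_mtok: float  # placeholder price per million output tokens
    spent_usd: float = 0.0
    calls: list = field(default_factory=list)

    def check(self):
        """Call before each request; refuses once the limit is reached."""
        if self.spent_usd >= self.max_cost_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.4f} of ${self.max_cost_usd:.4f}"
            )

    def record(self, input_tokens, output_tokens):
        """Call after each request with the token counts it reported."""
        cost = (
            input_tokens * self.input_price_per_mtok
            + output_tokens * self.output_price_per_mtok
        ) / 1_000_000
        self.spent_usd += cost
        self.calls.append((input_tokens, output_tokens, cost))
        return cost


tracker = UsageTracker(
    max_cost_usd=0.01, input_price_per_mtok=1.0, output_price_per_mtok=5.0
)
tracker.check()             # under the limit, so the call may proceed
tracker.record(1000, 1000)  # log what the call actually used
```

Checking before the call and recording after it means a limit can be overshot by at most one request, which is a reasonable trade for not having to predict output length in advance.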

589 hanzi have props now. Of those, 550 have the actual Mandarin Blueprint mnemonic props mapped. The data is getting dense enough that patterns emerge. I can see which actors I confuse, which sets share too many similar stories, which rooms need stronger visual anchors. The system is starting to teach me things about my own memory that I couldn't see from inside the learning process.

There's a statistics dashboard. Review history. Ease factor trends. Cards due by day. The kind of data that turns language learning from a feeling into a measurement.

I still forget characters. That hasn't changed. But now when I forget 马, I know why. The scene wasn't vivid enough. The prop was generic. The room was crowded with other characters that share the same actor. Scaffold shows me these patterns. It shows me the last time I reviewed, how I graded it, how the ease factor has drifted over time. Forgetting stops being a failure and starts being data.

Building your own learning tool is an odd kind of discipline. You spend as much time on the tool as on the thing you're learning. Some nights I'd catch myself optimizing a database query when I should have been reviewing level 14. But the tool compounds. Every hour I spend on Scaffold saves me minutes across thousands of future reviews. And the engineering itself reinforces the learning: I think about character structure while designing data models, about memory while writing algorithms, about cognition while building interfaces.

1,959 stories. 67 levels. One SQLite database. The characters are in there, waiting. Every morning I open the dashboard, see what's due, and start reviewing. The scenes play. The actors perform. The props do their work. And slowly, one review at a time, the characters stop being shapes and start being words.

The horse hasn't come back to haunt me in months.