Broadcasting to an Audience of One

The first morning it worked, I stood in my kitchen in Kitsilano holding a coffee I'd forgotten to drink. A voice I hadn't recorded was reading me the weather, the tides, and how many people were currently orbiting the Earth. It told me Liverpool play at noon, that I should bring a jacket, and that the sunset would hit 8:14 PM. Then it stopped. Forty-seven seconds of audio. I played it again.

I built a radio station for one listener. It runs on a Raspberry Pi, costs $1.29 a month, and broadcasts every morning at 7:00 AM to nobody but me.

The Problem With Smart Speakers

I used to ask Alexa for my morning briefing. If you've tried this, you know the drill. You get a news summary you didn't ask for, a weather report for the wrong part of the city, and a “fun fact” about pangolins. Optimized for engagement, not utility.

What I wanted was simple. Weather, tides, calendar. Delivered like a BBC Radio 4 newsreader who respects my time and doesn't try to make me smile. No jingles. No “and here's something interesting.” Just the facts, read well, in under two minutes.

So I built it.

The Stack Nobody Asked For

RaspberryFM is a Python script that wakes up via cron at 7:00 AM Pacific. It reaches out to a handful of sources, assembles a script, voices it, and emails me the transcript with a cost breakdown. The whole pipeline runs in about forty seconds.

Weather comes from Open-Meteo, which is free and accurate. Sunset times I calculate locally. Google Calendar events come in through the Calendar API. Sports fixtures for Liverpool, Leinster Rugby, and UFC numbered events get fetched and converted from GMT to Pacific time.
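The time conversion is the kind of step that is easy to get wrong by an hour twice a year. Here is a minimal sketch of one way to do it, assuming fixtures arrive as naive ISO 8601 timestamps in GMT/UTC. The function name `kickoff_in_pacific` and the choice of the `America/Vancouver` zone are illustrative assumptions, not taken from the RaspberryFM source.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # standard library, Python 3.9+

# Assumption: "Pacific" means Vancouver local time, including daylight saving.
PACIFIC = ZoneInfo("America/Vancouver")
UTC = ZoneInfo("UTC")


def kickoff_in_pacific(kickoff_utc: str) -> str:
    """Convert a naive ISO 8601 UTC timestamp to a Pacific clock time."""
    # The input carries no offset, so mark it as UTC explicitly.
    utc_time = datetime.fromisoformat(kickoff_utc).replace(tzinfo=UTC)
    local = utc_time.astimezone(PACIFIC)
    # %I is zero-padded ("07:30 AM"); drop the leading zero for reading aloud.
    return local.strftime("%I:%M %p").lstrip("0")


print(kickoff_in_pacific("2025-01-04T20:00:00"))  # winter, UTC-8
print(kickoff_in_pacific("2025-08-16T19:00:00"))  # summer, UTC-7
```

Using a named zone rather than a fixed offset lets the standard library handle the switch between standard and daylight time.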

Then there are the weird ones.

For tides, I scrape tideschart.com. For the space crew count, I scrape whoisinspace.com. Neither of these sites has an API. And this is where the project got interesting.

AI as a Parser

The conventional approach to web scraping is brittle. You inspect the page, find the CSS selectors, write a parser, and pray the site never changes its markup. I've written dozens of these. They break constantly. A single class name change and your pipeline dies at 6:58 AM while you're still asleep.

I tried something different. I feed the raw HTML to GPT-4o and ask it to extract what I need.

For tides, the prompt is straightforward: here's the HTML for Kitsilano Beach, tell me when the tide crosses 2 meters today and whether it's rising or falling. GPT-4o reads the table, understands the context, and returns structured data. If the site changes its layout tomorrow, the model should still recognize a tide table.

I originally used the WorldTides API for this. It was fine until I noticed the times were consistently fifteen minutes off from tideschart.com, the source I'd been trusting for years as a swimmer. Fifteen minutes matters when you're timing an ocean dip around a tidal window. So I cut the API, scraped the source I trusted, and let GPT parse it.
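The tide extraction can be sketched roughly as follows. This is an illustration of the technique, not the actual RaspberryFM code: the prompt wording, the JSON shape, and the names `build_tide_prompt` and `extract_tide_crossings` are all assumptions. The model call is passed in as a plain function (`complete`) so the sketch does not depend on any particular client library.

```python
import json
from typing import Callable


def build_tide_prompt(html: str, threshold_m: float = 2.0) -> str:
    """Build the extraction prompt (wording is hypothetical)."""
    return (
        "Below is the HTML of a tide chart page for Kitsilano Beach.\n"
        f"List every time today when the tide crosses {threshold_m} meters, "
        "and whether it is rising or falling at that moment.\n"
        "Reply with JSON only, shaped like: "
        '{"crossings": [{"time": "HH:MM", "direction": "rising"}]}\n\n'
        f"{html}"
    )


def extract_tide_crossings(html: str, complete: Callable[[str], str]) -> list[dict]:
    """Ask the model for tide crossings and validate what comes back.

    `complete` is any function that sends a prompt to an LLM and returns
    the text of its reply.
    """
    reply = complete(build_tide_prompt(html))
    try:
        crossings = json.loads(reply)["crossings"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return []  # unparseable reply: treat as "no data"
    if not isinstance(crossings, list):
        return []
    # Keep only entries that match the shape the prompt asked for.
    return [
        c for c in crossings
        if isinstance(c, dict)
        and isinstance(c.get("time"), str)
        and c.get("direction") in ("rising", "falling")
    ]
```

Validating the reply matters as much as the prompt. The model replaces the CSS selectors, but its output is still untrusted input to the rest of the pipeline.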

The space crew count works the same way. I send the HTML from whoisinspace.com to GPT-4o-mini and ask it to count the humans currently in orbit. This one is less reliable. The model sometimes miscounts, especially when the page lists crew members across multiple vehicles. I've accepted this. If the count is off by one, the briefing still works. And it's a good reminder that these models are probabilistic, not precise. Knowing where your system can afford to be wrong is half the engineering.
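One way to live with a count that is sometimes wrong is to accept small errors but reject absurd ones. The sketch below assumes the model replies with free text containing a number; the plausibility bounds and both function names are hypothetical.

```python
import re
from typing import Optional


def parse_crew_count(reply: str, low: int = 0, high: int = 30) -> Optional[int]:
    """Pull the first integer out of the model's reply.

    Returns None when there is no number or the number is implausible.
    The bounds are an assumption for illustration: an off-by-one count
    passes, a wildly wrong one does not.
    """
    match = re.search(r"\d+", reply)
    if match is None:
        return None
    count = int(match.group())
    return count if low <= count <= high else None


def crew_sentence(count: Optional[int]) -> str:
    """Render the line for the briefing, or nothing if the count is unusable."""
    if count is None:
        return ""
    noun = "person is" if count == 1 else "people are"
    return f"{count} {noun} currently in orbit."
```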

The insight here is small but useful: you don't always need an API. When a website is your source of truth, an LLM can parse it with a resilience that CSS selectors can't match. The model understands the meaning of the content, not just its position in the DOM.

Prompt Engineering as Tone Control

The script generation is where the personality lives. GPT-4o-mini writes a one-to-two-minute radio script from the assembled data, and the prompt is mostly about what not to do.

No puns. No enthusiasm. No exclamation marks. No “here's something exciting.” The tone is BBC World Service at 6 AM: calm, precise, slightly dry. I spent more time tuning this prompt than writing the actual data pipeline. Getting an LLM to suppress its instinct toward cheerfulness is harder than getting it to be accurate.
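A prompt built from prohibitions can be treated like a spec with a test. The sketch below is not the real RaspberryFM prompt, which isn't reproduced here. It assumes a rule list drawn from the constraints above, plus a hypothetical `tone_violations` check that can be run on each generated script while tuning.

```python
# Rules paraphrased from the constraints described above; the exact
# wording of the real prompt is not known.
TONE_RULES = [
    "No puns.",
    "No enthusiasm.",
    "No exclamation marks.",
    "Never introduce an item as exciting or interesting.",
]

# Phrases to flag in the output (an illustrative list, not exhaustive).
BANNED_PHRASES = (
    "here's something exciting",
    "here's something interesting",
    "fun fact",
)


def build_system_prompt(rules=TONE_RULES) -> str:
    """Assemble a system prompt that is mostly a list of prohibitions."""
    lines = [
        "You write a one-to-two-minute morning radio script.",
        "Tone: BBC World Service at 6 AM. Calm, precise, slightly dry.",
        "Rules:",
    ]
    lines += [f"- {rule}" for rule in rules]
    return "\n".join(lines)


def tone_violations(script: str) -> list[str]:
    """Return the tone rules a generated script breaks (empty list if clean)."""
    problems = []
    if "!" in script:
        problems.append("exclamation mark")
    lowered = script.lower()
    problems += [f"banned phrase: {p}" for p in BANNED_PHRASES if p in lowered]
    return problems
```

A check like this only catches mechanical slips. Whether the script actually sounds dry still takes a human ear.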

The voice is OpenAI's TTS model using the “shimmer” voice, which has the right cadence for a news read. Clean, neutral, no vocal fry.

Graceful Degradation

The one architectural decision I'm proudest of is the failure handling. Every data source is wrapped in a try/except that returns a sensible default. If the weather API is down, you get “weather data unavailable.” If Google Calendar times out, the briefing skips your schedule. If every single source fails simultaneously, you still get a briefing. It'll be short and a bit empty, but it'll be there.
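In outline, that pattern looks something like the sketch below. The helper names `safe_fetch` and `assemble_briefing`, and the shape of the `sources` mapping, are assumptions made for illustration.

```python
import logging
from typing import Callable


def safe_fetch(name: str, fetch: Callable[[], str], default: str) -> str:
    """Run one data source; on any failure, log it and return the default."""
    try:
        return fetch()
    except Exception:
        # Broad on purpose: no single source may take down the briefing.
        logging.exception("source %s failed; using default", name)
        return default


def assemble_briefing(sources: dict[str, tuple[Callable[[], str], str]]) -> str:
    """Join whatever each source produced, skipping empty sections."""
    parts = [
        safe_fetch(name, fetch, default)
        for name, (fetch, default) in sources.items()
    ]
    return " ".join(part for part in parts if part)


def broken_source() -> str:
    raise TimeoutError("upstream is down")


briefing = assemble_briefing({
    "weather": (broken_source, "Weather data unavailable."),
    "calendar": (broken_source, ""),  # empty default: section is skipped
    "tides": (lambda: "High tide at 9:40.", ""),
})
print(briefing)
```

Each source chooses its own default, so a failed weather fetch can announce itself while a failed calendar fetch simply drops out.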

The whole point of a morning briefing is reliability. It runs at 7:00 AM whether I'm awake to check on it or not. If one failure in one source kills the entire pipeline, the system is worse than useless because now I have to build monitoring for my morning alarm clock. Graceful degradation means I trust it and forget about it.

The Economics of One

The total cost is $0.043 per day. That's GPT-4o for the tide parsing, GPT-4o-mini for the crew count and script writing, and OpenAI TTS for the voice. Every transcript email includes a cost line so I can watch for drift.

$1.29 a month. Less than a coffee. For a personalized radio station that knows my calendar, my beach, and my teams.
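The monthly figure is the daily cost over a 30-day month: $0.043 × 30 = $1.29. Here is a small sketch of how a cost line for the transcript email might be formatted. The function name and the wording of the line are assumptions.

```python
from decimal import Decimal


def cost_line(daily_cost: Decimal, days: int = 30) -> str:
    """Format a one-line cost summary for the transcript email."""
    monthly = daily_cost * days
    return f"Cost today: ${daily_cost:.3f} (about ${monthly:.2f} over {days} days)"


# Decimal avoids binary floating-point noise in currency arithmetic.
print(cost_line(Decimal("0.043")))
```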

Three Things That Stuck

First, LLMs are underused as parsers. Everyone's building chatbots and agents. Almost nobody is using these models to replace the fragile glue code that holds data pipelines together. A model that understands HTML semantically is more robust to markup changes than a hundred lines of BeautifulSoup.

Second, prompt engineering is real engineering. The BBC tone didn't happen by accident. It took iteration, failed attempts, and a clear understanding of what the model defaults to when you don't constrain it. The prompt is a specification, and like any spec, precision matters.

Third, the best personal projects are ones you use every morning. RaspberryFM isn't a portfolio piece I built and abandoned. It's running right now. It ran this morning. It'll run tomorrow. That changes how you build it. You optimize for silence over noise, simplicity over cleverness, reliability over features.

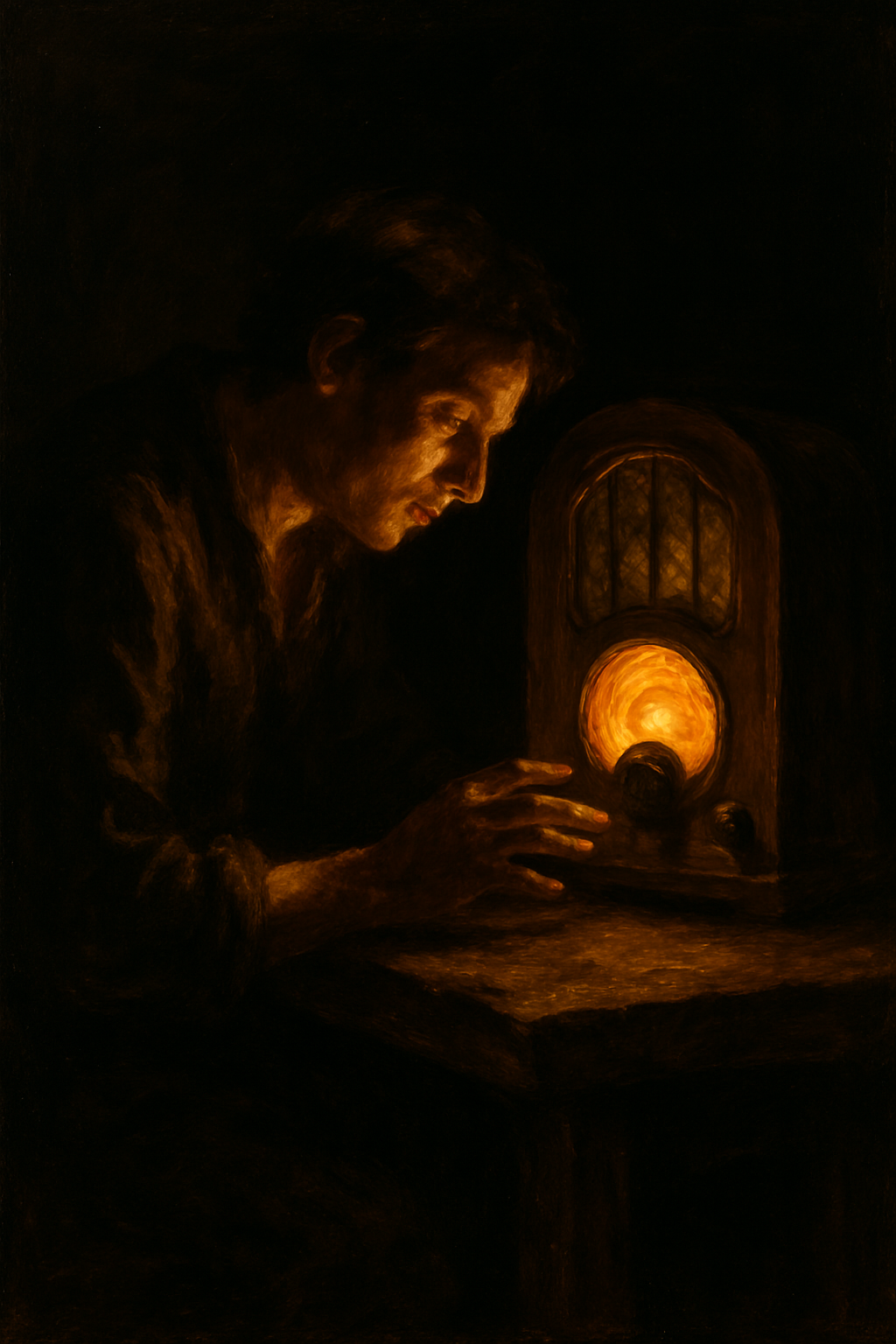

The Pi sits on a shelf in my apartment, a small black box with a green light. Every morning at 7:00 AM it wakes up, looks at the world, and tells me what I need to know. Then it goes back to sleep.